Lesson overview

According to classical mechanics, if you knew the initial conditions of the entire universe (that is, the initial positions and momenta of every particle in it), you could predict the entire past and future of the whole universe with infinite precision. But in the late nineteenth century, mathematicians studying the three-body problem, a problem that had eluded Newton, concluded that the behavior of the solar system becomes completely unpredictable after sufficiently long periods of time. The reason is a property known as sensitivity to initial conditions. In the early 1960s, an MIT professor named Edward Lorenz discovered that the weather, like the solar system, loses all predictability after a sufficient length of time (about one month). This led to the birth of chaos theory. Despite the apparent randomness of chaotic phenomena, however, it turns out that there is an underlying geometric order to them, described by fractal geometry. We shall introduce chaos theory and fractal geometry in this lesson.

History

"Chaos - A mathematical adventure It is a film about dynamical systems, the butterfly effect and chaos theory, intended for a wide audience. From Jos Leys, Étienne Ghys and Aurélien Alvarez, the makers of Dimensions, comes CHAOS, a math movie with nine 13-minute chapters."\(^{[1]}\)

Newton’s law of gravity and laws of motion, which he published in his Principia Mathematica, led to the first grand unification in the history of physics: the discovery that terrestrial and celestial phenomena are in fact the same kind of phenomena. This was a striking and remarkable result. For millennia, people had followed Aristotle’s teaching that terrestrial and celestial phenomena were separate; Newton showed that they were actually the same. Johannes Kepler analyzed Tycho Brahe’s vast body of observational data to derive, empirically, his three laws of planetary motion, which describe the motions of all orbiting celestial bodies, not just planets. Galileo Galilei formulated the law of fall, also empirically, by observing balls of different weights roll down an inclined plane. The law of fall, \(\Delta y \propto \Delta t^2\), described the motion of falling terrestrial objects here on Earth (such as flying cannonballs). But Isaac Newton, by writing down \(\vec{F}_g=\sum\vec{F}\) (that is, by setting the net force in his second law, \(\sum\vec{F}=m\vec{a}\), equal to the force of gravity alone), demonstrated that just one force, gravity, is responsible for all of these terrestrial and celestial motions. The same force that makes apples fall and shapes the trajectories of cannonballs also governs the motions of the Moon and planets. They are in fact the same phenomenon: an apple falls due to gravity and traces a straight line, a cannonball falls due to gravity and traces a parabola, and the Moon and planets also fall but trace out circles and ellipses. In principle, the consequences of these laws could describe the motion of everything in the classical universe. In the Principia, Newton went on to work out a myriad of these consequences, such as Kepler’s laws describing the motions of the planets, the tides of the sea caused by the Moon’s gravity, and the parabolic motions of falling terrestrial objects.

Eventually, Leonhard Euler and Joseph-Louis Lagrange generalized Newton’s second law into the Euler-Lagrange equation, which could describe the motions of more complicated systems such as double pendulums and vibrating strings. Pierre-Simon Laplace and Siméon Denis Poisson generalized Newton’s law of gravity to describe the gravitational fields of more complicated distributions of mass. But these were merely different, albeit more general, formulations of the same branch of physics, concerned with the same range of physical phenomena: classical mechanics. These scientists had enormous success using these laws to describe the motions and behavior of many classical systems. But even more than all of these “practical applications,” classical mechanics could in principle be used to predict everything in the classical universe. Newton took up the ideas developed by Democritus and Lucretius and modeled the universe as a myriad of tiny, classical billiard balls. Given the initial conditions (the initial positions and momenta) of all the billiard balls in the universe, Newton could, in principle, use his second law to predict, with infinite precision, the entire past or future motions \(\vec{R}_i(t)\) of all these billiard balls for all values of time. Since he could predict these motions exactly and with perfect precision, he would also know, exactly, the entire past and future of all macroscopic objects in the universe. You could know everything.

Classical mechanics described a “clockwork universe” in which, given the initial conditions of a system, the entire future of that system could be predicted deterministically with arbitrarily high precision. It was therefore unsurprising that Laplace, summarizing the views of nearly all the scientists of his time, wrote:

We may regard the present state of the universe as the effect of its past and the cause of its future. An intellect which at a certain moment would know all forces that set nature in motion, and all positions of all items of which nature is composed; if this intellect were also vast enough to submit these data to analysis, it would embrace in a single formula the movements of the greatest bodies of the universe and those of the tiniest atom; for such an intellect nothing would be uncertain and the future just like the past would be present before its eyes.

To summarize that eloquent statement: according to classical mechanics, if you give me the initial conditions, I can use Newton’s laws (or the generalizations of them such as the Euler-Lagrange equation) to turn the mathematical crank and determine the entire past and future of the entire universe with arbitrarily high precision. Such an intellect would be vast indeed!
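The "mathematical crank" can be sketched in a few lines of code. This is a minimal illustration, not Newton’s or Laplace’s own method. It assumes two bodies moving in a plane, hypothetical units in which \(G = 1\), and the velocity Verlet scheme (a standard numerical integrator chosen here for convenience) to step Newton’s second law forward in time. The names `accelerations` and `evolve` are hypothetical.

```python
import math

G = 1.0  # gravitational constant in hypothetical units chosen for convenience


def accelerations(masses, positions):
    """Newton's law of gravity: the acceleration of each body due to all the others."""
    acc = [[0.0, 0.0] for _ in masses]
    for i, (xi, yi) in enumerate(positions):
        for j, (xj, yj) in enumerate(positions):
            if i != j:
                dx, dy = xj - xi, yj - yi
                r3 = math.hypot(dx, dy) ** 3
                acc[i][0] += G * masses[j] * dx / r3
                acc[i][1] += G * masses[j] * dy / r3
    return acc


def evolve(masses, positions, velocities, dt, steps):
    """Step Newton's second law forward (dt > 0) or backward (dt < 0) with velocity Verlet."""
    pos = [list(p) for p in positions]
    vel = [list(v) for v in velocities]
    acc = accelerations(masses, pos)
    for _ in range(steps):
        for p, v, a in zip(pos, vel, acc):
            v[0] += 0.5 * dt * a[0]  # half "kick" from the current forces
            v[1] += 0.5 * dt * a[1]
            p[0] += dt * v[0]        # "drift" to the new position
            p[1] += dt * v[1]
        acc = accelerations(masses, pos)
        for v, a in zip(vel, acc):
            v[0] += 0.5 * dt * a[0]  # second half kick from the new forces
            v[1] += 0.5 * dt * a[1]
    return pos, vel


# Hypothetical initial conditions: a heavy "sun" at rest and a light "planet"
# on a circular orbit of radius 1, whose period is 2*pi when G * M = 1.
masses = [1.0, 1e-6]
positions = [(0.0, 0.0), (1.0, 0.0)]
velocities = [(0.0, 0.0), (0.0, 1.0)]
dt, steps = 2 * math.pi / 1000, 1000

run_a = evolve(masses, positions, velocities, dt, steps)
run_b = evolve(masses, positions, velocities, dt, steps)
print(run_a == run_b)  # True: identical initial conditions, identical future

back = evolve(masses, run_a[0], run_a[1], -dt, steps)  # run the clock backwards
print(back[0][1])      # the planet returns (up to rounding) to its starting point (1, 0)
```

Running the same initial conditions twice gives identical results. Stepping backward with a negative time step recovers the starting state. In this toy model, that is the sense in which the present determines both the past and the future. The sketch works only because the computer is handed the initial conditions exactly.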

Now, there was one phenomenon that had stumped Newton and that he could not explain using classical mechanics. This is the infamous three-body problem: how do three bodies (for example, the Sun, Earth, and Moon) move under the influence of gravity alone? The problem seemed simple and straightforward at first, but it quickly became a perplexing mess. Newton once said that “such a problem exceeds the force of any human intellect.” About two centuries later, Henri Poincaré took up the problem, spurred by a lucrative prize that King Oscar II of Sweden and Norway was offering to anyone who could crack the puzzle. Poincaré knew, as did everyone else, that calculus is the universal language of motion. The general character of calculus is that a small change in an initial condition (for example, the position or momentum of the Moon) produces a small change in an object’s motion. When Poincaré wrote up his paper for the King’s prize, he made precisely this assumption in his mathematical proof. When one of the editors received his paper, he noted that this assumption was unjustified, since it did not follow from the mathematics. Unfortunately for Poincaré, his paper was printed and distributed anyway. He was extremely worried that this would ruin his reputation. But Poincaré revisited the problem and wrestled with it for a while. To make a long story short, he arrived at the following conclusion:

It may happen that small differences in the initial conditions produce very great ones in the final phenomena. A small error in the former will produce an enormous error in the latter. Prediction becomes impossible.

And in that statement was the birth of a new branch of mathematics and science: chaos theory. The signature of chaos is this. Plug two different initial conditions into the equations of motion (say, Newton’s second law). Even if they differ by only a minuscule amount, the two predictions (that is, the two trajectories predicted from Newton’s second law) will start off almost the same. After a long enough time, however, they will differ substantially. This is known as sensitivity to initial conditions, or the butterfly effect. A system that exhibits the butterfly effect (that is, one whose behavior changes wildly in response to even a minuscule change in its initial conditions) is called chaotic.

But what if you could always measure the exact initial conditions of a system? If you could do that, then whenever you plugged those initial conditions into your equations, the predictions would always turn out the same. For a long time, people hoped that Newton’s clockwork universe could be salvaged by making arbitrarily precise measurements. Even this hope was shattered in the 1920s by the discovery of the laws of quantum mechanics. Werner Heisenberg discovered that, for the laws of quantum mechanics to be consistent, it must be physically impossible to measure both the position and the momentum of a particle simultaneously with arbitrary precision. Quantitatively, the uncertainties obey \(\Delta x\,\Delta p \ge \hbar/2\). This means that, at the most fundamental level, you can measure the state of an atom or other very small particle with only finite precision. Therefore, you can also measure the positions and momenta of macroscopic objects with only finite precision, and there will always be some error associated with any physical measurement. Imagine measuring the initial conditions of identical systems in many experiments: each of those measurements will be off by a little (within the error). If you use the equations to predict the future behavior of a chaotic system from each measurement, the predictions will eventually be radically different. Essentially, after sufficiently long intervals of time, predictability is lost.
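One way to make this loss of predictability quantitative rests on an assumption the lesson does not state. Suppose that a small initial error \(\delta_0\) grows roughly exponentially, \(\delta(t)\approx\delta_0 e^{\lambda t}\), with some rate \(\lambda>0\) that depends on the system. If a prediction is useful only while its error stays below a tolerance \(\Delta\), then the prediction horizon \(T\) satisfies

\[
\delta_0\, e^{\lambda T} = \Delta
\quad\Longrightarrow\quad
T = \frac{1}{\lambda}\ln\frac{\Delta}{\delta_0}.
\]

Under this assumption, measuring the initial conditions 1000 times more precisely (replacing \(\delta_0\) with \(\delta_0/1000\)) extends the horizon by only \(\ln(1000)/\lambda \approx 6.9/\lambda\). Each tenfold gain in precision buys only a fixed, additive amount of extra time. This is why even very precise measurements cannot restore long-range predictability.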

What Poincaré discovered was that our solar system is a chaotic system, and that predicting its state at a sufficiently distant time in the future is impossible. This point in history represented a radical departure from the old Newtonian worldview of a clockwork universe. In that worldview, using the method of reductionism (by analogy, understanding the components of the clockwork), we could deterministically predict the behavior and future of the universe. In the following sections, we look at three other examples of chaotic systems: the weather, a game of dice, and electrical transmission. We also explore the deep relationship between chaos theory and fractal geometry.

Atmospheric phenomena such as the weather are chaotic

"Chaos - A mathematical adventure It is a film about dynamical systems, the butterfly effect and chaos theory, intended for a wide audience. From Jos Leys, Étienne Ghys and Aurélien Alvarez, the makers of Dimensions, comes CHAOS, a math movie with nine 13-minute chapters."\(^{[2]}\)

Figure 1: "Edward Norton Lorenz (May 23, 1917 – April 16, 2008) was an American mathematician, meteorologist, and a pioneer of chaos theory, along with Mary Cartwright. He introduced the strange attractor notion and coined the term butterfly effect."\(^{[3]}\) This image is for educational purposes only. Image credit: https://history.aip.org/history/Thumbnails/lorenz_edward_a1.jpg

Edward Lorenz used a very simple system of differential equations to model the weather. He treated the atmosphere as a convecting fluid. He used three variables, \(x\), \(y\), and \(z\), to represent the rate of convection and the horizontal and vertical temperature variations in this fluid at any given moment. While working at MIT, he used a computer to crank out calculations with his equations. The initial conditions he plugged into his equations were rounded to six decimal places, and the computer used them to predict the weather a month into the future. He then performed the same calculations again, except that this time he plugged in initial conditions rounded to three decimal places, and left his computer to get a cup of coffee. When he came back, he noticed that both calculations predicted the same weather for a short time into the future, as we would expect from Newtonian mechanics. But after a long enough period of time, these two predictions, which had started off almost identical, began to diverge wildly from one another.

Real weather does in fact behave like the Lorenz equations in this respect. When we measure the initial conditions associated with the temperature and convection of the atmosphere, there is an error in that measurement, as there is in any physical measurement. This error is small and doesn’t affect the predictions of the Lorenz equations within a period of about a week. After a week, however, the range of possible initial conditions within that small error starts to produce noticeably different predictions. After about a month, those predictions become wildly different. In the words of Edward Lorenz,

Two states differing by imperceptible amounts may eventually evolve into two considerably different states ... If, then, there is any error whatever in observing the present state—and in any real system such errors seem inevitable—an acceptable prediction of an instantaneous state in the distant future may well be impossible....In view of the inevitable inaccuracy and incompleteness of weather observations, precise very-long-range forecasting would seem to be nonexistent.
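The sketch below reproduces the effect Lorenz observed. It integrates the three-variable Lorenz system, usually written \(\dot x=\sigma(y-x)\), \(\dot y=x(\rho-z)-y\), \(\dot z=xy-\beta z\), from two starting points that differ only in how the first coordinate is rounded. The parameter values \(\sigma=10\), \(\rho=28\), \(\beta=8/3\) are illustrative assumptions, not a record of Lorenz’s actual computer run. So are the step size, the integrator (classical fourth-order Runge-Kutta) and the starting values 0.506127 and 0.506.

```python
import math

# Lorenz's classic parameter values (an illustrative choice).
SIGMA, RHO, BETA = 10.0, 28.0, 8.0 / 3.0
STEPS_PER_UNIT = 100          # assumed step size dt = 0.01 in model time units
DT = 1.0 / STEPS_PER_UNIT


def lorenz(state):
    """Right-hand side of the Lorenz equations: returns (dx/dt, dy/dt, dz/dt)."""
    x, y, z = state
    return (SIGMA * (y - x), x * (RHO - z) - y, x * y - BETA * z)


def rk4_step(state, dt):
    """Advance the state by one classical fourth-order Runge-Kutta step."""
    def shifted(k, h):
        return tuple(s + h * ki for s, ki in zip(state, k))
    k1 = lorenz(state)
    k2 = lorenz(shifted(k1, dt / 2))
    k3 = lorenz(shifted(k2, dt / 2))
    k4 = lorenz(shifted(k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def trajectory(state, duration):
    """Return the states at t = 0, DT, 2*DT, ..., duration."""
    states = [state]
    for _ in range(duration * STEPS_PER_UNIT):
        state = rk4_step(state, DT)
        states.append(state)
    return states


precise = trajectory((0.506127, 1.0, 1.0), duration=40)  # "six decimal places"
rounded = trajectory((0.506, 1.0, 1.0), duration=40)     # "three decimal places"
gaps = [math.dist(p, r) for p, r in zip(precise, rounded)]

for t in (0, 5, 10, 15, 20, 30, 40):
    print(f"t = {t:2d}   separation = {gaps[t * STEPS_PER_UNIT]:.6f}")
```

The printed separations should stay tiny at first and then grow until they are comparable to the size of the attractor itself. At that point, the two "forecasts" are effectively unrelated. Time here is measured in the model’s dimensionless units, not in days.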

Chaos in a game of dice

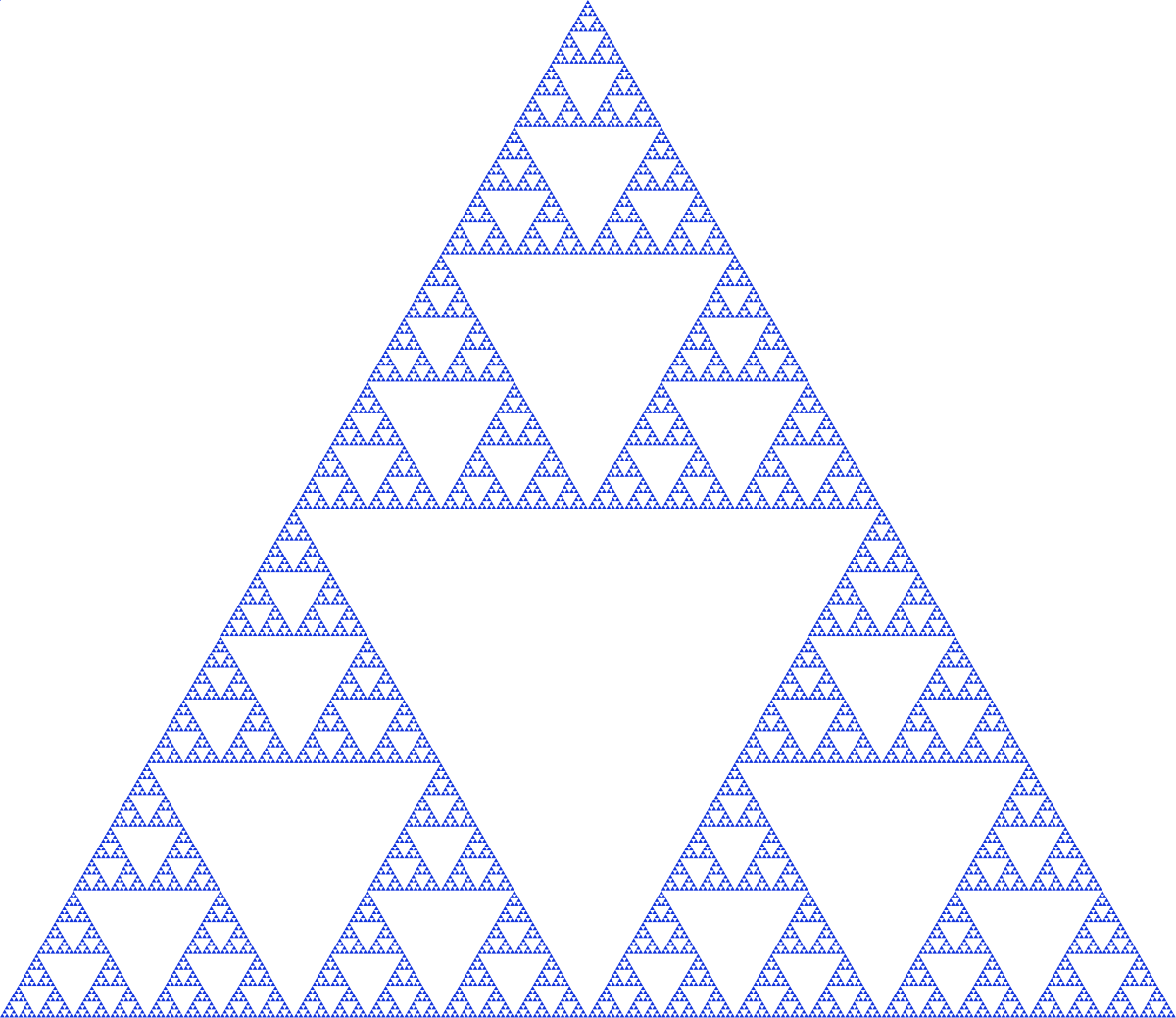

Figure 2: Label the three vertices of an equilateral triangle \((1,2)\), \((3,4)\), and \((5,6)\), one label for each pair of faces of a die. Choose any point \((x,y)\) anywhere on the plane, either inside or outside the triangle. If you roll a 1 or 2, draw a new point \((x_0,y_0)\) halfway between \((x,y)\) and the vertex labeled \((1,2)\). Do the same with the vertex \((3,4)\) for a roll of 3 or 4, and with the vertex \((5,6)\) for a roll of 5 or 6. Repeat this many times.

There is a fundamental connection between chaos theory and fractal geometry. The roll of a die on a very rigid table is indeed chaotic. But, analogous to the way neurons fire in your head, there is an underlying order and structure to that apparent randomness. That underlying order and structure is described by the patterns of fractal geometry. For example, suppose that you went through the following procedure. Choose a point \((x,y)\) at random, as in Figure 2, and roll the die on a rigid table. If you get, say, a 1 or 2, draw the new point \((x_0,y_0)\) halfway between \((x,y)\) and the vertex labeled \((1,2)\). Roll the die again; if you get, say, a 3 or 4, draw the new point \((x_1,y_1)\) halfway between \((x_0,y_0)\) and the vertex labeled \((3,4)\). Keep repeating. If you repeated this procedure billions of times, common sense tells you that you would just get a random mess of points everywhere. But the distribution of points that you would actually get is a particular kind of fractal, as shown in Figure 3. The apparent randomness of this chaos (that is, of the outcomes of the dice rolls) has an underlying structure that resembles a fractal. Remarkable! You could zoom in indefinitely on any of the portions containing triangles, and the same patterns and shapes would keep reappearing. This property is known as self-similarity. What is truly mind-blowing, though, is that these beautiful fractal patterns can describe events in the physical world.

Figure 3: After rolling the dice (and drawing a new point for each dice roll) billions of times, a fractal pattern known as the Sierpinski triangle will eventually form. Remarkable! Image credit: by Beojan Stanislaus (Own work) [CC BY-SA 3.0 (https://creativecommons.org/licenses/by-sa/3.0)], via Wikimedia Commons.
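The dice procedure can be simulated, with a pseudo-random number generator standing in for the die. This is a minimal sketch. The coordinates of the three vertices, the starting point, the number of points and the helper names `chaos_game` and `ascii_plot` are all assumptions made for illustration.

```python
import math
import random

# Assumed coordinates for the three labelled vertices of an equilateral triangle
# with side length 1: faces 1-2, 3-4 and 5-6 of the die pick vertices 0, 1 and 2.
VERTICES = [(0.0, 0.0), (1.0, 0.0), (0.5, math.sqrt(3) / 2)]


def chaos_game(n_points, start=(3.0, -2.0), burn_in=20, seed=1):
    """Roll a simulated die, move halfway towards the chosen vertex, and record the points."""
    rng = random.Random(seed)  # seeded so that the run is reproducible
    x, y = start
    points = []
    for i in range(burn_in + n_points):
        roll = rng.randint(1, 6)            # one roll of a fair six-sided die
        vx, vy = VERTICES[(roll - 1) // 2]  # 1,2 -> 0   3,4 -> 1   5,6 -> 2
        x, y = (x + vx) / 2, (y + vy) / 2   # the new point lies halfway to that vertex
        if i >= burn_in:                    # drop early moves, which may lie far from the triangle
            points.append((x, y))
    return points


def ascii_plot(points, width=64, height=32):
    """Very rough text rendering of the points inside the triangle's bounding box."""
    top = math.sqrt(3) / 2
    grid = [[" "] * width for _ in range(height)]
    for x, y in points:
        col = min(max(int(x * width), 0), width - 1)
        row = min(max(int((top - y) / top * height), 0), height - 1)
        grid[row][col] = "*"
    return "\n".join("".join(row) for row in grid)


points = chaos_game(50_000)
print(ascii_plot(points))
```

Even at this crude resolution, the printout should show the nested empty triangles of the Sierpinski pattern rather than a uniform smear. Once the point is inside the triangle, every move places it in one of the three corner triangles, each half the size of the original. So the large central triangle stays empty however long the game runs.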

Chaos in electrical transmission

The mathematician Benoit Mandelbrot, while working at IBM, was asked to solve a problem that had perplexed engineers. Computers “communicate” with each other by sending information encoded in electrical signals. The problem posed to Mandelbrot was this: why, no matter what the engineers did, were there always apparently random errors in the signals? Mandelbrot’s natural bent, since childhood, toward geometry and patterns gave him a fresh and unique perspective on the problem. He noticed that there would be an hour of electrical transmission with no errors and, in the next hour, transmission that did have errors. If he took the hour containing no errors and subdivided it into smaller and smaller time intervals, he did not uncover any additional errors. But if he took the other hour (the one containing errors) and subdivided it into two 30-minute intervals, he would find that one of those intervals contained errors whereas the other did not. If he then took the 30-minute interval containing errors and subdivided it into two 15-minute intervals, again one of those intervals contained errors while the other did not. Mandelbrot thus discovered that these errors occurred in clusters with an underlying pattern. Indeed, Mandelbrot could have kept subdividing the time intervals containing errors arbitrarily many times, and this pattern would have kept re-emerging. This particular pattern, although unknown to the engineers working at IBM, was known to mathematicians as the Cantor set.\(^{[4]}\)
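The bookkeeping in Mandelbrot’s subdivision procedure can be sketched as a simple recursive bisection. The error times below are invented for illustration; they are not IBM data. With only four made-up errors, the sketch shows the procedure itself rather than a genuine Cantor-like pattern. The function name `intervals_with_errors` is hypothetical.

```python
def intervals_with_errors(error_times, start, end, depth):
    """Halve [start, end) repeatedly, keeping only sub-intervals that contain an error.

    Returns one list of (lo, hi) intervals per level of subdivision.
    """
    levels = [[(start, end)]]
    for _ in range(depth):
        next_level = []
        for lo, hi in levels[-1]:
            mid = (lo + hi) / 2
            for a, b in ((lo, mid), (mid, hi)):
                # half-open intervals, so no error is counted in two halves
                if any(a <= t < b for t in error_times):
                    next_level.append((a, b))
        levels.append(next_level)
    return levels


# Hypothetical error times, in minutes, within a two-hour transmission log:
# the first hour is error-free, the second hour is not.
errors = [71.0, 73.5, 76.2, 77.9]
levels = intervals_with_errors(errors, 0.0, 120.0, depth=6)
for k, level in enumerate(levels):
    width = 120.0 / 2 ** k
    print(f"level {k}: {len(level)} of {2 ** k} intervals of {width:g} min contain errors")
```

As the intervals get finer, the ones containing errors make up a shrinking fraction of the total time. This is what it means for the errors to come in clusters.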

The Cantor set can be obtained through the following procedure:

1. Take a line segment, subdivide it into three pieces, and throw the middle piece away.
2. Take the two remaining segments on the left and right and subdivide each of them into three pieces, so you have six pieces in total.
3. Throw away the two middle pieces, so that you now have four pieces in total.

If you continue this iterative process an infinite number of times, you obtain the Cantor set, as shown in Figure 4. The Cantor set is a fractal: like the fractal in the last example, it exhibits self-similarity at all size scales. The fundamental physical reason a fractal pattern appears here is that the outcome of the electrical signals (that is, the strings of 1s and 0s) is chaotic, like the outcome of a dice roll: it is sensitive to initial conditions. Thus, Mandelbrot had uncovered the fundamental physical origin of the seemingly random errors in the electrical signals. These errors were an inevitable consequence of the electrical signals exhibiting chaotic behavior. It is therefore physically impossible to remove these errors completely.

Figure 4: An image of the Cantor set.
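The middle-thirds construction is easy to carry out exactly with rational arithmetic. The sketch below uses Python’s `fractions.Fraction` so that no rounding creeps in. The helper names `cantor_step`, `cantor_set` and `render` are hypothetical. Each printed row shows one more iteration, drawn at a resolution of 27 cells.

```python
from fractions import Fraction


def cantor_step(intervals):
    """Cut every interval into thirds and throw the middle third away."""
    kept = []
    for a, b in intervals:
        third = (b - a) / 3
        kept.append((a, a + third))   # left piece
        kept.append((b - third, b))   # right piece
    return kept


def cantor_set(iterations):
    """Closed intervals remaining after the given number of middle-third removals."""
    intervals = [(Fraction(0), Fraction(1))]
    for _ in range(iterations):
        intervals = cantor_step(intervals)
    return intervals


def render(intervals, width):
    """Draw one row of text: '#' where a cell's midpoint is still in the set."""
    row = []
    for i in range(width):
        centre = Fraction(2 * i + 1, 2 * width)
        row.append("#" if any(a <= centre <= b for a, b in intervals) else " ")
    return "".join(row)


for n in range(4):
    level = cantor_set(n)
    total_length = sum(b - a for a, b in level)
    print(f"{render(level, 27)}   {len(level)} pieces, total length {total_length}")
```

After \(n\) iterations there are \(2^n\) pieces with total length \((2/3)^n\). This length shrinks toward zero even though infinitely many points remain. Self-similarity shows up here as an exact identity. Take the part of iteration \(n+1\) lying in \([0, 1/3]\) and scale it up by a factor of 3: the result is exactly iteration \(n\).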

This article is licensed under a CC BY-NC-SA 4.0 license.

References

1. It's so blatent. "Chaos | Chapter 1 : Motion and determinism - Panta Rhei". Online video clip. YouTube. YouTube, 22 April 2016. Web. 05 November 2017. Retrieved from https://www.youtube.com/watch?v=c0gDLEHbYCk&t=1s

2. It's so blatent. "Chaos | Chapter 7 : Strange Attractors - The butterfly effect". Online video clip. YouTube. YouTube, 22 April 2016. Web. 05 November 2017. Retrieved from https://www.youtube.com/watch?v=aAJkLh76QnM

3. Edward Norton Lorenz. (2017, October 27). In Wikipedia, The Free Encyclopedia. Retrieved 15:56, November 5, 2017, from https://en.wikipedia.org/w/index.php?title=Edward_Norton_Lorenz&oldid=807378289

4. Sardar, Ziauddin, and Iwona Abrams. Introducing Chaos: a Graphic Guide. Icon Books, 2013.